Recap wrote: I hear you on the KOF XII subject. I'm still to see how the game works with the system set at 480p and a 31-kHz monitor, though it can't do the background graphics any good, so I'm not holding my breath. What a waste...

What a PURE WASTE! The most important things, the ones your eyes are on every second, are the sprites. You can't take the time to notice every little detail in the background, even though you know perfectly well that the background has a lot of detail (your eyes can't analyse every detail individually, but they can perceive that there is a lot of information overall).

And more importantly, you can play the game that way since the system (either, the 3-60 or the Type X 2) does support 480p. Your samples are great and necessary for showing everybody that KOF XII's graphics are ruined by lame design decisions and a full-of-shit technology

Yeah, they support 480p, but not at maximum quality (i.e. an unfiltered display).

The graphics of KOF XII are ruined first of all by the lack of 2D technology. For many years now, no hardware has been able to display sprites; everything is "flat 3D". That means a lack of power and memory exactly where 2D graphics need them.

I'm pretty sure SNK-P really began the sprites at 720p (like BlazBlue). But they quickly realised it wasn't possible to achieve the quality they wanted (lots of shades and frames). So they reduced the size (to half the size), and relied on shitty filtering tricks.

When I saw Ryo's face for the first time (I expect he was one of the early fighters they started with), with a "missing line" for his mouth (as can happen with 50% nearest-neighbor resizing), I immediately thought of that.

If the sprite had been genuinely designed at 360p, the artist would not have skipped that particular line.
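To illustrate how a 50% nearest-neighbor resize can swallow a one-pixel line, here is a minimal Python sketch. The tiny "face" bitmap and the choice to keep the even rows and columns are assumptions made for the example; they are not taken from the actual KOF XII assets or tools.

```python
def downscale_half_nearest(image):
    """Halve an image by keeping every other row and column.

    Assumption: the resampler picks the even-indexed rows/columns.
    A real tool may sample with a different phase.
    """
    return [row[::2] for row in image[::2]]


# Hypothetical 8x8 sprite detail: '#' is a dark pixel, '.' is skin.
face = [
    "........",
    "........",
    ".##..##.",  # eyes, on an even row (kept)
    "........",
    "........",
    ".######.",  # one-pixel-high mouth, on an odd row (dropped)
    "........",
    "........",
]

half = downscale_half_nearest(face)
for row in half:
    print(row)
```

The eyes survive because they sit on a sampled row, while the mouth line falls entirely between samples and vanishes. An artist drawing directly at the lower resolution would simply have placed that line on a real pixel row.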

KOF sprites are great, even if they are only "360p". That wouldn't have been a problem if there were large multi-sync 16/9 CRTs in arcades (and at consumer level). But instead, we jumped from 4/3 CRTs to HD flat screens.

If KOF XII had come in a nice 16/9 cab at 24 kHz, everybody would have noticed the gorgeous graphics, with lots of vibrant colors, great shadow and lighting effects, smooth animation... Resolution isn't everything in a quality picture. But for many years, people have been brainwashed into believing that resolution is the most important thing. They so badly want flat screens with a high number of pixels, but these are shitty screens that LOSE their sharpness as soon as something moves (because the cells are too slow to follow the animation). They want screens with a "crystal clear picture", but these screens are fed non-native signals 99% of the time (blurry picture). Well, I've already talked about that...

and hopefully you'll create with them some sort of a virtual museum in order to let the internet see and take notes, but... just that.

I expect more than that... ^^'

I expect hacked dashboards that allow unfiltered downscaling, and other resolutions (853x480 for example).

Man, on this fucking generation of hardware, almost nothing is done at the native resolutions of HD standards. And when it's done, the graphics are less sophisticated than those done at "sub-HD internal res".

I do really wonder if there's not a true 768-lines mode on this game. BlazBlue has it, and Type X 2's most common mode is WXGA, given the arcade monitor standards.

BlazBlue is 1280x768. WXGA is 1366x768. Even on the original hardware in a brand new cab, it is fuckin' upscaled and filtered (at least, only in the horizontal direction)... But anyway, BlazBlue sprites are always displayed at 1:1 pixel mapping, whether the height of the screen is 720 (for 1280x720 panels) or 768. The backgrounds are in 3D, so they're easy to resize.

KOF is "pure" 2D. If you could set the game to 768p, that would mean the backgrounds are cropped when you see them at 720p. But no, there are no extra pixels beyond 720p. The graphics window is 1280x720, whatever screen displays it. Since there are hardly any flat screens at this exact resolution, you will always have your "crystal clear" picture (:P) upscaled and filtered, on top of the internal blurring of the sprites... Aw shit... where is my hammer? ]:)
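To put numbers on this, the following Python sketch computes the exact scale factors needed to fill a 1366x768 WXGA panel from the two resolutions mentioned above. The helper name `scale_factors` is made up for the example; the resolutions are the ones quoted in the post.

```python
from fractions import Fraction


def scale_factors(src, dst):
    """Return the exact (horizontal, vertical) factors to stretch src onto dst."""
    (src_w, src_h), (dst_w, dst_h) = src, dst
    return Fraction(dst_w, src_w), Fraction(dst_h, src_h)


WXGA = (1366, 768)

# BlazBlue renders 1280x768, so only the width has to be stretched.
blazblue = scale_factors((1280, 768), WXGA)

# KOF XII's window is 1280x720, so both axes have to be stretched.
kof = scale_factors((1280, 720), WXGA)

for name, (fx, fy) in (("BlazBlue", blazblue), ("KOF XII", kof)):
    print(f"{name}: x{float(fx):.4f} horizontally, x{float(fy):.4f} vertically")
```

Neither stretched axis gets an integer factor (683/640 horizontally, 16/15 vertically for the 720-line window), so a fixed-pixel panel has to either filter the picture or show pixels of uneven size.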

I'm sure there'll be an option to remove the filter (at least for home versions), but in the end, same issue as in XII -- you can't play the game and please your eyes at the same time.

I don't think so. For KOF XII, since the sprites are upscaled by exactly 200% (round number, constant pixel size), they considered the option of disabling the filters. But for KOF XIII, if you display the sprites without filtering them, they will look fuckin' ugly, with enormous jaggies. They will look uglier than the unfiltered ones in KOF XII.
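The "constant pixel size" point can be shown with a small Python sketch. It stretches one row of pixels with nearest-neighbor sampling and measures how wide each source pixel ends up. The 150% factor is purely hypothetical, picked to show a non-integer case; it is not a claim about the real scaling used in KOF XIII.

```python
def upscale_row_nearest(row, dst_width):
    """Stretch one row of pixels to dst_width with nearest-neighbor sampling."""
    src_width = len(row)
    return [row[x * src_width // dst_width] for x in range(dst_width)]


def run_lengths(row):
    """Return the width of each run of identical consecutive pixels."""
    runs = []
    for value in row:
        if runs and runs[-1][0] == value:
            runs[-1][1] += 1
        else:
            runs.append([value, 1])
    return [length for _, length in runs]


source = list("ABCDEFGH")  # eight distinct source pixels

double = upscale_row_nearest(source, 16)  # exactly 200%
uneven = upscale_row_nearest(source, 12)  # hypothetical 150%

print(run_lengths(double))  # every source pixel is 2 wide
print(run_lengths(uneven))  # widths alternate between 2 and 1
```

At 200% every source pixel becomes a clean 2x block, so the filter can be switched off without distorting the artwork. At a non-integer factor the widths alternate, which is what shows up as jaggies once the filter is removed.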

The only correct way to display the game without filtered or jaggy sprites is to set the resolution to 853x480 (and have a display that can resolve this resolution without artifacts: some old plasmas, and of course CRTs).

The truth is that I love the slight 'melting' with bright red lines and whatnot. I'm so used to it that I like to think of it as a particularity of CRT displays (and indeed I miss it in your photographs). But I can understand that, while it may help the overall visual enjoyment depending on your tastes, it goes against pure 'pixel analysis', yeah.

I understand that, but once the ultimate goal is reached (perfect CRT simulation), you can have a shitload of options to mess up the screen (adding misconvergence errors, setting the screen out of focus, overdriving the red gun, etc.). But FIRST, it's important to achieve the perfect thing, the top, so that afterwards you can easily "go down".

If you take a bad set-up as the reference, it won't be possible to go beyond that... And that's why most people interested in this difficult subject get it all wrong, and can hardly propose anything decent...

(Well, by now, some dudes might have reached a good point, but they still lack the essential knowledge of CRTs, and are still far from perfection.)

Looking forward to it, though you need too big resolutions for that and you know web design requirements... Nevertheless, your ultimate goal is solving to some degree the emulation-related issue, isn't it?

The goal is to solve the emulation matter, and to offer a scaling algorithm that allows developers to continue to create pixel art in the fixed-resolution HD era.

Beyond that, it could even help to play old video games on modern screens (because CRTs won't last forever...) with top-quality visuals and almost nonexistent lag (about 1/60 second, something barely noticeable, and way better than any scan converter available now).

About web design requirements, I'm working on it, but even the large resolution you propose (about 1280x960) is barely enough to show something accurate. Even the best picture I take from a real CRT can't show enough detail when reduced to around 960p, either one way or the other...

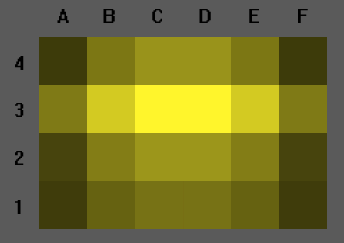

The best I can reach for now is this:

http://raster.effect.free.fr/tv/Photos_ … na_02f.jpg

Note that this picture was taken from a low-curvature Trinitron (no cheating, it's a real old 29" low-res tube, not a computer one! :P ), 3 m away from the screen. The curvature seems very low at this distance, and the focus of the picture is good even in the corners.

Each time I try to go below that, the picture shows lots of artifacts ([>] like this...).

I can reduce that with a little bit of horizontal blur, but anyway, the picture will always lose the smallest details of the surface of the CRT screen (i.e. the phosphor layout).

Damn, do you realise? All those fuckin' "High Resolution crystal clear" screens of today can't show the magnificence of a low-res picture tube in one go... :P :P :P

The best way is to provide full shots that necessarily lack the phosphor layout (something that seems too "clean" to you), and to propose shots of small parts of the action. Something that can show the greatness of pixel art displayed on a CRT, like [>] this or [>] this, maybe...